We've Been Scared Before

Mar 8, 2026

From mainframes to spreadsheets to the internet to AI: same fear, different technology. A history of "this time, the machines win" and a reaction to Anthropic's latest future-of-work research report.

––––––––––

I was deep in a call last week with a founder, helping her think through what roles to hire for in the next 3 months, when she asked the question I keep hearing: "Which of these jobs will just... not exist in two years?"

We sat with it for a moment. I gave her my honest answer, which was some version of "I genuinely don't know, and I'd be skeptical of anyone who claims they do." But the question didn't leave me... and I’ve been healthily stressed about it for the last few days, even more so after Anthropic came out with plug-ins in Claude and OpenAI released GPT-5.4 this past week.

Then, on March 5th, Anthropic published Labor Market Impacts of AI: A New Measure and Early Evidence. I read it that evening and stayed up all night writing this essay, as something clicked. It forced me to zoom out, to ask a different question: not just "what will AI do to jobs?" but "have we been here before?"

We have. Several times, actually. And the record is worth looking at carefully before we write any more apocalyptic LinkedIn posts!

The First Great Panic: "Cybernation"

In 1961, TIME Magazine ran a cover story called "The Automation Jobless" with the subtitle "Not Fired, Just Not Hired." Nearly five and a half million Americans were unemployed, and the mainframe computer was public enemy number one. The article documented sector-by-sector decline with actual numbers: chemical industry jobs fell 3% while output shot up 27%; steel capacity rose 20% while the workforce shrank by 17,000. The argument wasn't just that automation was killing jobs. It was that automation was structurally preventing new ones from being created at all.

The initial panic was focused on blue-collar factory workers, the steel mill and auto plant employees whose physical work was being replaced by machinery. But what's less remembered is that by 1962, the fear had explicitly moved upward into the office. Donald N. Michael's influential pamphlet Cybernation: The Silent Conquest named specific casualties: bank tellers, retail clerks, and massive swaths of middle management would be entirely replaced by calculating machines.

This gave the anxiety real intellectual weight. In 1964, 34 prominent thinkers signed The Triple Revolution, an open memo to President Johnson. A Nobel laureate was on it, along with eminent economists. They declared that computers and automated machines would create "a system of almost unlimited productive capacity which requires progressively less human labor" and argued the "traditional link between jobs and incomes" was being broken. Their proposals got drastic fast: shorter work hours at the same pay, massive public works expansion, and early versions of a guaranteed basic income.

President Kennedy had already gone on record in a February 1962 press conference saying the country needed to find "25,000 new jobs every week" just to absorb displacement from machines. Norbert Wiener, the MIT mathematician who founded the field of cybernetics, had written as early as 1950 that automated machines were "the precise equivalent of slave labor" and that coming unemployment would make the Great Depression "seem a pleasant joke." By 1965, TIME was running yet another cover predicting a 20-hour workweek, and quoting forecasters who said "by 2000, the machines will be producing so much that everyone in the U.S. will, in effect, be independently wealthy."

The industrial psychologists of the era were equally alarmed. Studies and union documents from the 1960s described the psychological toll of human-machine interaction in factories as "overwhelming," citing fears that workers would find it "boring or demoralizing to be told by a machine to press a button." Unions pushed for and won what they called "automation clauses" in collective bargaining agreements, requiring employers to consult union representatives before new computational processes were installed. The “socio-technical systems” movement, a branch of organizational psychology, was born largely in response: it argued that any technology deployment had to simultaneously optimize both the machine system and the human one, or it would fail on both fronts.

So here’s what actually happened. President Johnson convened a National Commission on Technology, Automation, and Economic Progress in 1964. After extensive study, it issued a conclusion that reads as almost elegant in hindsight: "The basic fact is that technology eliminates jobs, not work." Mainframes lowered the cost of information processing, which created more demand for data analysis, tracking, and reporting, which triggered a service sector expansion that easily absorbed displaced labor. The bank tellers Michael named as doomed in 1962? Still employed.

The broader point, one that MIT labor economist David Autor quantified decades later, is that roughly 60% of U.S. workers today are in occupations that didn't even exist in 1940, with more than 85% of long-term employment growth derived directly from technology-driven job creation. The jobs being eliminated were always a fraction of the story.

Era Two: The Paperless Office and the Death of the Accountant

Fast forward to the PC era in the late 1970s and 1980s. Different technology, same fear, much more specific predictions.

On June 30, 1975, BusinessWeek published a cover story titled "The Office of the Future." Xerox PARC's director George Pake contributed a vision that was strikingly concrete: by 1990, the physical handling of paper would be virtually gone. At every desk, a display terminal. All correspondence electronic. And the workers who handled paper for a living, filing clerks, stenographers, and typists specifically, would be eliminated outright. The article popularized the term "paperless office" and treated it as a matter of when, not if. Pake also noted, almost as an aside, that the coming networked systems felt "kind of scary," that workers would feel "intensely interconnected" and "vulnerable to the machine." That parenthetical ended up being more prescient than the main thesis.

A few years later, the spreadsheet triggered its own version of the same panic. Apparently (I couldn’t find the primary source, but see here), a 1983 Fortune article predicted that "electronic spreadsheets and computer-based ledgers will sharply reduce demand for accounting clerks" and that computers would drastically cut the need for accountants overall. The logic was airtight on the surface: if a machine can calculate a ledger balance instantly and without error, why keep the human calculators? Similarly, Nobel laureate Wassily Leontief made what became the era's most-quoted prediction in a 1983 academic paper: "The role of humans as the most important factor of production is bound to diminish, in the same way that the role of horses in agricultural production was first diminished and then eliminated by the introduction of tractors." Any worker performing tasks by following specific instructions could, in principle, be replaced.

Photo: TIME Magazine Cover, The New Economy, May 30, 1983.

On the manufacturing side, the fears were materializing more visibly. TIME's 1983 "New Economy" cover reported that 211,000 autoworkers, 19% of the entire blue-collar auto workforce, had been indefinitely laid off due to robotics. Harvard's William Abernathy estimated Ford could only afford to keep half its 256,600 American employees to remain competitive with Japanese manufacturers. David Noble's Forces of Production (1984) added an important argument to this: the deployment of computer-numerical-control machine tools was driven not purely by technical efficiency but by management's desire to remove skilled machinists' control over production. The technology was being used as a power tool, not just a productivity one. Harry Braverman's Labor and Monopoly Capital (1974), which sold over 120,000 copies, had made the broader version of this argument: management systematically deskills workers, separating thinking from doing, and transfers knowledge into machines. It was cited everywhere.

The organizational psychologists had a field day. Electronic Performance Monitoring, or EPM, emerged as a major I/O psychology concern during this period. For the first time in history, the digitization of workflow allowed granular, continuous, automated tracking of white-collar employee output. Peer-reviewed research consistently linked EPM to heightened stress, loss of personal control, and a breakdown in organizational trust. It was called "the invisible eye." Meanwhile, computerization created a severe age-based divide: longitudinal studies showed that older workers who couldn't bridge the computer literacy gap faced significant increases in transitions out of work entirely, whether through early retirement or forced exit. The psychological burden of suddenly feeling incompetent in a role you had performed successfully for decades was a defining feature of 1980s workplace distress.

And then the outcomes arrived, and they were genuinely strange.

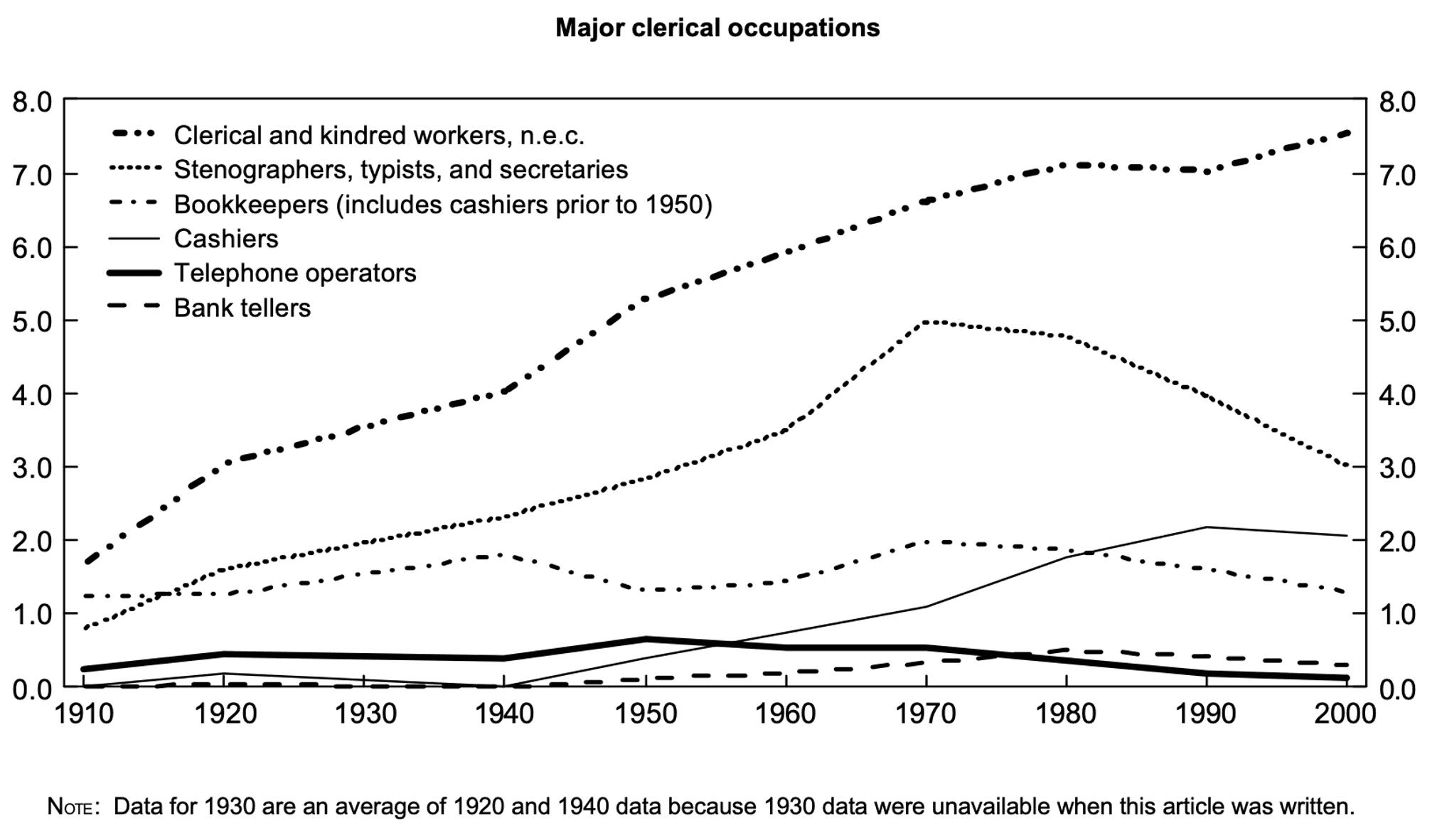

The paperless office prediction failed completely. Desktop printers made paper consumption increase globally through the 1990s. Psychological and ergonomic research revealed workers found physical paper better for complex cognitive tasks, document review, and spatial organization. The technology that was supposed to kill paper ended up generating more of it. For stenographers and basic typists, though, the prediction held: BLS occupational data shows those roles did decline sharply after 1970. The narrowest, most routine data-entry work was, in fact, vulnerable. What happened is that those workers largely transitioned into administrative assistant roles requiring higher-level organizational and interpersonal skills rather than disappearing from the workforce entirely.

From Chart 9 in Occupational changes during the 20th century. Bureau of Labor Statistics, Monthly Labor Review, March 2006.

Accountants, however, tell the opposite story. Rather than shrinking the profession, electronic spreadsheets freed accountants from arithmetic and allowed them to run complex financial models, risk projections, and scenario analyses that were previously impossible to do manually. According to Bureau of Labor Statistics data, accounting and bookkeeping workers grew from 1.3 million in 1980 to 2.1 million by 1995, a 62% increase, during the precise period when spreadsheets were supposedly eliminating them. The task of arithmetic was automated. The occupation of accounting expanded into the space that automation opened.
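The 62% figure is plain percent-change arithmetic on the two numbers quoted above. A minimal sketch, assuming a hypothetical helper called `percent_change` (the name is mine, not anything from the BLS):

```python
def percent_change(start: float, end: float) -> float:
    """Return the percentage change going from start to end."""
    return (end - start) / start * 100.0


# Figures as quoted in this essay, in millions of workers.
workers_1980 = 1.3
workers_1995 = 2.1

growth = percent_change(workers_1980, workers_1995)
print(f"Growth 1980-1995: {growth:.0f}%")  # Growth 1980-1995: 62%
```

The same helper works for any of the before-and-after numbers in this essay, as long as both values use the same unit.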

Shoshana Zuboff's In the Age of the Smart Machine (1988), based on a multi-year ethnographic study across eight organizations, explained why outcomes varied so much: IT could either "automate" (replace effort) or "informate" (translate work into visible, shareable information that expands human judgment). The outcome depended almost entirely on how management chose to deploy the technology. This is not a technological observation. It is an organizational one.

Robert Solow captured the macro-level irony in a 1987 book review: "You can see the computer age everywhere but in the productivity statistics." Despite massive computing investment through the 1970s and 80s, productivity growth had dropped from roughly 2.9% to 1.1%. A 1990 paper put this in perspective by drawing a parallel to electric power: after the introduction of the dynamo in the 1880s, it took roughly 40 years for factory electrification to show up in measurable productivity gains, because exploiting a general-purpose technology requires fundamental reorganization of how everything works.

We keep forgetting this lag exists.

Era Three: Tellers, Travel Agents, and "Is Your Job Next?"

The internet era had two distinct displacement fears, and the outcomes were the most instructive of all three periods because for the first time, one of the major predictions was actually correct.

Federal Reserve symposium papers from the mid-1980s had already flagged bank tellers as a near-certain casualty of ATM proliferation and online banking. The logic was obvious: ATMs do what tellers do, so more ATMs mean fewer tellers. Between 1995 and 2010, ATMs quadrupled in the U.S. from roughly 100,000 to 400,000 units. Bank teller employment nonetheless rose from about 500,000 in 1980 to 550,000 by 2010. The proposed reason was that ATMs significantly reduced the operational cost of running a branch, which led banks to open more urban branches to capture market share. Fewer tellers per branch, but more branches overall. And the teller role itself evolved from cash-handling into relationship banking, where the actual work became cross-selling credit cards, auto loans, and investment products. Tasks requiring human interpersonal skill that machines couldn't touch.

Then the sequel arrived. Post-2010, mobile banking apps finally did what ATMs couldn't, and teller employment fell by roughly 30% into the 2020s. The prediction was right. It just took 30+ years to materialize, and it looked completely different than forecasters expected in the interim.

Travel agents are the other side of that coin. They're the era's confirmed casualty. Between 2000 and 2012, U.S. travel agent employment fell by about 50%. Two simultaneous shocks: airlines slashed agent commissions in 1995, then sites like Expedia and Travelocity automated the agent's core function of schedule aggregation and information retrieval entirely. Unlike bank tellers, travel agents had no “O-ring protection,” no adjacent human task that became more valuable once the routine work was automated. The technology substituted for the whole job, not just a piece of it.

Jeremy Rifkin's The End of Work (1995), which became an international bestseller, predicted the wholesale wipeout of millions of jobs across agriculture, manufacturing, and the service sector, and advocated for a mandated 30-hour workweek and a massive government shift of the population into voluntary community organizations to absorb the displaced. It was persuasive enough to be taken seriously everywhere. In 2003, BusinessWeek ran a cover story titled "Is Your Job Next?" documenting white-collar job flows to India and China. Forrester Research predicted in 2002 that 3.3 million U.S. white-collar service jobs and $136 billion in wages would move offshore by 2015, with IT leading the exodus. The Forrester number became one of the most-cited statistics in the entire offshoring debate. It drove policy conversations. It never materialized as predicted, and most of the jobs identified as vulnerable maintained healthy employment growth.

The internet era also introduced organizational psychology concepts that now feel eerily familiar.

"Survivor syndrome" was formally studied during this period: the distinct psychological distress and guilt experienced by employees who remained after colleagues were displaced by technological restructuring.

And "telepressure," the compulsive urge to respond immediately to work communications regardless of hour or location, became a clinical construct as laptops and cell phones dissolved the boundary between work and home.

Neither of these was a prediction about which jobs would vanish. Both were accurate descriptions of how the surviving workers felt while watching the predictions play out around them.

There is one more era worth naming before our current one. In 2013, Oxford researchers Carl Benedikt Frey and Michael Osborne published The Future of Employment, a peer-reviewed study analyzing 702 occupations that concluded 47% of U.S. jobs were at high risk of computerization within "a decade or two."

It became the defining quantitative claim of the pre-ChatGPT era, probably the most-cited academic study on job displacement in a generation. The specific occupations flagged as most vulnerable included telemarketers, loan officers, cashiers, paralegals, and retail salespersons: roles defined primarily by routine information processing and structured decision-making. A decade-plus on, most of those jobs maintained healthy employment growth.

The 47% never materialized as predicted. Notably, the Anthropic report opens by observing that "a prominent attempt to measure job... vulnerability identified roughly a quarter of US jobs as at risk, but a decade on, most of those jobs maintained healthy employment growth." Its authors are probably gesturing at the Oxford researchers.

Era Four (or Five? Six?): Anthropic’s Report

This is the backdrop against which Anthropic's hot-off-the-press report lands. They open with exactly this humility: "The track record of past approaches gives reason for humility." I don’t think they are promising to predict the future of work. Rather, they are building a better measurement tool before the effects are clear, so that future analysis is more reliable than the post-hoc rationalizations we've been doing for 70 years.

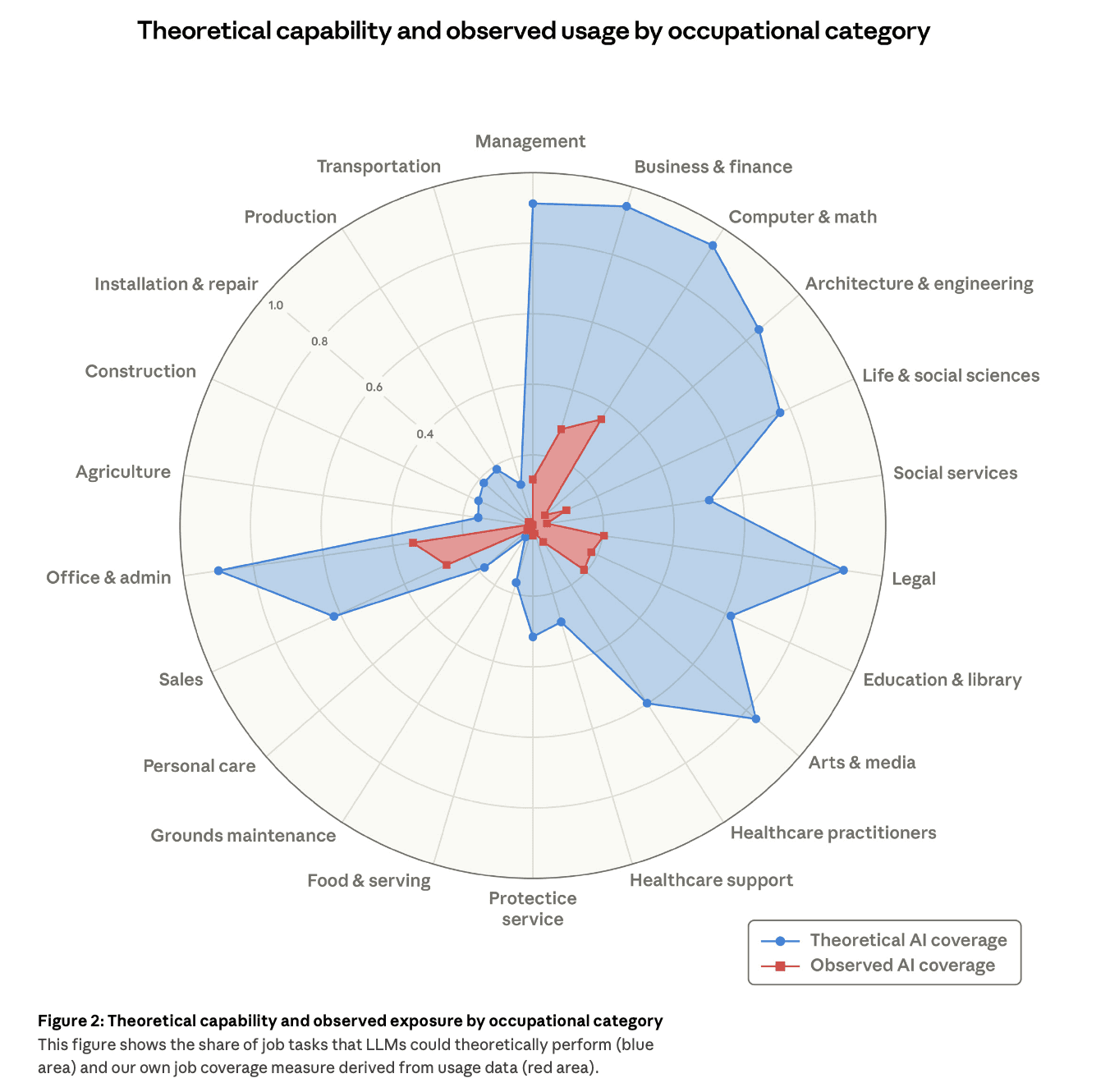

Their core innovation is a metric they call "observed exposure," which combines what AI is theoretically capable of with what AI is actually being used for in professional settings. One caveat: the latter is drawn from Anthropic's own usage data.

The gap between those two things is the most important number in the report. Figure 2 shows it visually: a radar chart where the blue area, representing what LLMs could theoretically do across occupational categories, dramatically overshoots the red area, representing what Claude is actually observed doing in professional contexts. AI could theoretically handle 94% of tasks in Computer and Math roles. In practice, Claude is covering around 33% of those tasks right now. Office and Admin shows 90% theoretical exposure.

Observed reality is a fraction of that. In other words, at this moment in time, per Anthropic, the capability exists on paper, but the deployment hasn't come anywhere close to catching up... yet.
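One rough way to make that gap concrete is to compare the two figures quoted above for Computer and Math roles. The sketch below is only an illustration: the `Exposure` class and its method names are hypothetical, and the subtraction and ratio are back-of-the-envelope arithmetic, not the report's methodology.

```python
from dataclasses import dataclass


@dataclass
class Exposure:
    """Theoretical vs. observed task coverage for one occupational category."""
    category: str
    theoretical_pct: float  # share of tasks an LLM could handle in principle
    observed_pct: float     # share of tasks seen in actual professional usage

    def gap_points(self) -> float:
        """Percentage-point distance between capability and deployment."""
        return self.theoretical_pct - self.observed_pct

    def realized_share(self) -> float:
        """Fraction of the theoretical exposure that shows up in practice."""
        return self.observed_pct / self.theoretical_pct


# Figures as quoted in this essay for Computer and Math roles.
computer_math = Exposure("Computer and Math", theoretical_pct=94.0, observed_pct=33.0)

print(computer_math.gap_points())                # 61.0
print(round(computer_math.realized_share(), 2))  # 0.35
```

By this crude reading, only about a third of what is theoretically exposed in that category is showing up in observed usage.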

Figure 2 in Labor market impacts of AI: A new measure and early evidence. Anthropic. March 5, 2026.

Figure 3 lists the ten most exposed occupations by this measure. Computer programmers sit at the top with 74.5% observed coverage. Customer service representatives come in at 70.1%. Data entry keyers at 67.1%. Market research analysts at 64.8%. Financial and investment analysts at 57.2%.

When I read that list, the historical echo was immediate. Data entry keyers are the 2020s version of the 1975 typists and filing clerks that BusinessWeek declared would be gone by 1990. The prediction was right; it just took about 50 years. Customer service representatives are the bank tellers of the internet era: middlemen whose core function of relaying and processing information is now being automated at the task level, though the question of what adjacent human work becomes more valuable remains open. Financial and investment analysts are the 1983 accountants, the ones Fortune said spreadsheets would eliminate, except this time the tasks being automated are more cognitive than arithmetic. The threat profile has genuinely escalated, and I think this AI era is different. The agents are autonomous. We’re building towards superintelligence. The advancement of technology right now is very different from that of previous eras.

And then there are computer programmers at 74.5%. This is historically unprecedented and genuinely ironic. In every prior wave, the people building the technology were the safe ones. The programmers and engineers were the beneficiaries, not the casualties. The Anthropic data flips this completely. If you study the pattern across 70 years of automation anxiety, you see the threat has moved steadily upward through the skill and credential hierarchy, from factory floors to filing clerks to accountants to IT workers, and now to the people writing the code itself. That's a different kind of prediction than anything prior eras were making.

At the other end of the exposure spectrum, the Anthropic report finds zero coverage for cooks, motorcycle mechanics, lifeguards, bartenders, and dishwashers. These are jobs that might have been flagged as obviously vulnerable half a century ago because they can be described as physically repetitive. Today, they register no AI exposure whatsoever. Physical, context-dependent, and spatially grounded labor has consistently survived every wave of automation anxiety, at least so far, but that may be about to change with the rise of research exploring the physical intelligence space, which I wrote about in my last essay, My Bet on Physical Intelligence.

The demographic finding in the report is worth its own paragraph because it completely inverts the historical narrative. Workers in the most AI-exposed occupations tend to be older, more educated, and significantly higher-paid, with graduate degree holders representing nearly 17% of the high-exposure group versus only 4.5% of the zero-exposure group, an almost fourfold difference. The most exposed workers earn 47% more on average than the least exposed. This is not the robots-stealing-factory-jobs story that defined the 1960s, nor is it the paperless-office-eliminating-clerks story from the 1980s. The people most at risk from AI exposure, at least by this measure, are knowledge workers with advanced credentials who have historically been the most insulated from prior waves.

That reframes the policy question in ways that haven't fully landed in mainstream coverage yet.

A recent MIT Sloan study tracking actual AI adoption within firms from 2010 to 2023 adds useful texture here. When AI automates only a subset of tasks within a role, employment in that role actually grows by approximately 3% over five years. When AI can perform the vast majority of tasks within a job, employment falls by roughly 14%. The Anthropic report's observed exposure gap, the wide distance between what AI can theoretically do and what it's actually doing, matters because it suggests most occupations are still in the "subset" zone, where the O-ring effect and complementarity tend to dominate. The moment that gap closes significantly for any given role is when the dynamic starts to change.

And the headline finding: there is no statistically significant increase in unemployment among the most AI-exposed workers since ChatGPT launched in late 2022. The unemployment trends for high-exposure and low-exposure workers have been essentially parallel. One signal worth watching: job-finding rates for workers aged 22 to 25 entering AI-exposed occupations have dropped roughly 14% in the post-ChatGPT period, just barely crossing the threshold of statistical significance. (Interestingly, IBM said publicly in February 2026 that it would triple the number of entry-level jobs because of AI.)

The report put a number on what has been the discourse among this age group, and I say that as someone who is 25 as of writing this essay. Young people trying to enter these fields, those ‘knowledge worker’ roles, are finding fewer open doors. While it’s not the same as mass unemployment, it's not nothing either.

Introducing Artificial Intelligence Replacement Distress (AIRD)

In just 48 hours, I’ve spent a lot of time reading research about job displacement caused by technological shifts. But over the last few years, including studying organizational development in college, I’ve come to understand a different perspective on how people actually experience work, rather than how economists or Nobel laureates model it from 30,000 feet. And one thing that keeps nagging at me about displacement debates across all these eras is how consistently the human experience at the task level gets underweighted.

Robert Karasek's 1979 research on job demands and control identified something important: mental strain comes not just from high-demand work but from high-demand work combined with low control over how you do it. The introduction of AI that removes tasks without giving workers meaningful new ownership of their roles, without expanding their judgment or their autonomy, could make psychological strain measurably worse for the people who remain even if aggregate employment looks fine. Zuboff's “automating” versus “informating” framework maps directly onto this. If AI is deployed as a monitoring and constraining tool, it deskills and alienates. If it's deployed to surface information and expand what a worker can reason about, it does the opposite.

Modern I/O psychology has a name for the specific anxiety AI is producing: Artificial Intelligence Replacement Distress (AIRD). Unlike the demoralization of the 1960s, which was rooted in fears of physical idleness, or the technostress of the 1980s, which was about surveillance and cognitive overload, AIRD represents something closer to an epistemological threat. For the first time, technology isn't just replacing physical strength or arithmetic speed. We’re seeing now that it simulates advanced cognition, language, and analysis at near-human or better-than-human levels. That threatens not just the job, but also the professional identity and the perceived intellectual value of the knowledge worker. Research using Latent Profile Analysis has found a paradoxical twist: people with high career adaptability and competence often experience more AI anxiety, not less, because they can accurately assess what AI is capable of and how it maps onto their own work.

What the historical record shows is that this anxiety, while real and worth taking seriously, has consistently not produced the outcomes it predicted. The mechanism that keeps intervening is what economists call the O-ring production function: when automation makes one step in a chain of tasks cheaper and more reliable, it tends to raise the economic value of the remaining human-operated steps rather than eliminating the chain entirely. The accountant whose arithmetic became automated became more valuable as a modeler. The bank teller freed from cash-handling became more valuable as a relationship manager.

Whether AI represents a qualitative break from that pattern, because it's moving into cognitive and linguistic territory that was previously the exclusive domain of human labor, is the real question. I sway both ways pretty much every other hour. So the honest answer is that I, or we, don't know yet.

What I, or We, Also Still Don't Know

We don’t know whether the 22-to-25 hiring slowdown in AI-exposed occupations is early signal or statistical noise. Anthropic’s paper itself says it's barely significant and carries multiple alternative explanations. But I think Gen Z will stay resilient, and our career adaptability is very different from that of the generations above us.

We don't know whether the gap between theoretical AI capability and observed deployment closes quickly or slowly. Every prior wave closed slowly. But AI capability curves have been consistently surprising for three years straight. I'm not confident historical timelines map cleanly onto the current moment, nor should we map them onto the status quo at all. However, these past eras are good ways to learn about our reaction to change, in addition to observing how the change impacted us.

We don't know which of the high-exposure occupations will develop new adjacent roles the way bank tellers evolved into relationship managers, and which ones will follow the travel agent path: a clean technological substitution with nowhere left to go. While I do think some, if not many, of today's traditional jobs will be displaced, I believe there will be new, or modified, roles because of AI where a human still needs to be in the loop.

What I do think I know: we should take findings and reports on the future of work with a grain of salt. While there were some logical assumptions and accurate predictions from the previous eras, there were still prophecies that ended up not becoming reality. And while Anthropic says it found “limited evidence” of AI employment effects to date, that doesn’t mean there won't be impacts in the future... good or bad (both are subjective based on your point of view).

We should all still keep track. Stay up to date on what’s going on with AI and its impacts, both on everyday life and from the aerial view. Stay true to what you want out of your work, or career: the skills you learn and strengthen, the people (or AI agents) you work with, the functions or industries you continue to explore.

Or, just go 100% into agriculture or grounds maintenance. I’m open to tending to a lavender field in rural France and trimming the boxwood bushes around the ancient, petit chateau I end up fixing up, if AI takes over the world.